Assessing Individuals

A critical aspect of Enabling Individuals to Perform Systems Engineering is the ability to fairly assess individuals. This article describes how to assess the systems engineering (SE) competencies that individuals need, the SE competencies they actually have, and their SE performance.

Assessing Competency Needs

If an organization wants to develop a customized competency model, an initial decision is “make versus buy.” If there is an existing SE competency model that fits the organization's context and purpose, the organization might want to use the existing SE competency model directly. If existing models must be tailored or a new SE competency model developed, the organization should first understand its context.

Determining Context

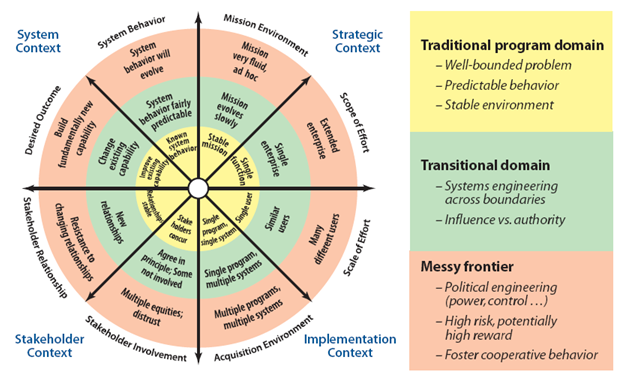

Prior to understanding what SE competencies are needed, it is important for an organization to examine the situation in which it is embedded, including environment, history, and strategy. As Figure 1 shows, MITRE has developed a framework characterizing different levels of systems complexity. (MITRE 2007, 1-12) This framework may help an organization identify which competencies are needed. An organization working primarily in the “traditional program domain” may need to emphasize a different set of competencies than an organization working primarily in the “messy frontier.” If an organization seeks to improve existing capabilities in one area, extensive technical knowledge in that specific area might be very important. For example, if stakeholder involvement is characterized by multiple equities and distrust, rather than collaboration and concurrence, a higher level of competency in being able to balance stakeholder requirements might be needed. If the organization's desired outcome builds a fundamentally new capability, technical knowledge in a broader set of areas might be useful.

Figure 1 MITRE Enterprise Systems Engineering Framework

In addition, an organization might consider both its current situation and its forward strategy. For example, if an organization has previously worked in a traditional systems engineering context (MITRE 2007) but has a strategy to transition into enterprise systems engineering (ESE) work in the future, that organization might want to develop a competency model both for what was important in the traditional SE context and for what will be required for ESE work. This would also hold true for an organization moving to a different contracting environment where competencies, such as the ability to properly tailor the SE approach to “right size” the SE effort and balance cost and risk, might be more important.

Determining Roles and Competencies

Once an organization has characterized its context, the next step is to understand which specific SE roles are needed and how those roles will be allocated to teams and individuals. To assess the performance of an individual, it is essential to state explicitly the roles and competencies required of that individual. The references from the section on SE Roles and Competencies provide guides to existing SE standards and SE competency models that can be leveraged.

Assessing Individual SE Competency

To enable individuals to improve or fulfill the required SE competencies identified by the organization, it must be possible to assess each individual's existing level of competency. This assessment informs the interventions needed to further develop individual SE competency. Listed below are possible methods for assessing an individual's current competency level; an organization should choose the method appropriate to its context, as identified previously.

Proficiency Levels

One approach to competency assessment is the use of proficiency levels. Proficiency levels are frameworks that describe the level of skill or ability of an individual on a specific task. One popular proficiency framework is based on the “levels of cognition” in Bloom’s taxonomy (Bloom 1984), presented below in order from least complex to most complex.

- Remember – Recall or recognize terms, definitions, facts, ideas, materials, patterns, sequences, methods, principles, etc.

- Understand – Read and understand descriptions, communications, reports, tables, diagrams, directions, regulations, etc.

- Apply – Know when and how to use ideas, procedures, methods, formulas, principles, theories, etc.

- Analyze – Break down information into its constituent parts and recognize their relationship to one another and how they are organized; identify sublevel factors or salient data from a complex scenario.

- Evaluate – Make judgments about the value of proposed ideas, solutions, etc., by comparing the proposal to specific criteria or standards.

- Create – Put parts or elements together in such a way as to reveal a pattern or structure not clearly there before; identify which data or information from a complex set is appropriate to examine further or from which supported conclusions can be drawn.

Other examples of proficiency levels include those of the INCOSE competency model (INCOSE 2010): awareness, supervised practitioner, practitioner, and expert. The Academy of Program/Project & Engineering Leadership (APPEL) competency model uses the levels participate, apply, manage, and guide (Menrad and Lawson 2008). The U.S. National Aeronautics and Space Administration (NASA), as part of APPEL (APPEL 2009), has also defined proficiency levels by role: (I) technical engineer/project team member; (II) subsystem lead/manager; (III) project manager/project systems engineer; and (IV) program manager/program systems engineer.

Situational Complexity

Competency levels can also be situationally based. The levels for the SPRDE competency model are based on the complexity of the situation to which the person can appropriately apply the competency (DAU 2010):

- No exposure to or awareness of this competency.

- Awareness: Applies the competency in the simplest situations.

- Basic: Applies the competency in somewhat complex situations.

- Intermediate: Applies the competency in complex situations.

- Advanced: Applies the competency in considerably complex situations.

- Expert: Applies the competency in exceptionally complex situations.
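One way an organization might put such a scale to work is to record a required level per competency for a role and compare it with an individual's assessed level. The sketch below is illustrative only: the numeric ordering of the levels, the `competency_gaps` helper, and the example competency names and values are assumptions made for this example and are not part of the SPRDE model.

```python
from enum import IntEnum


class SituationalLevel(IntEnum):
    """Situational levels from the list above, ordered lowest to highest.

    The integer values are an assumption made here so levels can be compared.
    """
    NO_EXPOSURE = 0
    AWARENESS = 1
    BASIC = 2
    INTERMEDIATE = 3
    ADVANCED = 4
    EXPERT = 5


def competency_gaps(required, assessed):
    """Return {competency: shortfall in levels} for competencies below the required level.

    A competency missing from `assessed` is treated as NO_EXPOSURE.
    """
    gaps = {}
    for name, needed in required.items():
        actual = assessed.get(name, SituationalLevel.NO_EXPOSURE)
        if actual < needed:
            gaps[name] = int(needed) - int(actual)
    return gaps


# Hypothetical role profile and individual assessment (illustrative values only).
required_profile = {
    "risk management": SituationalLevel.ADVANCED,
    "stakeholder requirements": SituationalLevel.INTERMEDIATE,
    "technical planning": SituationalLevel.BASIC,
}
assessed_profile = {
    "risk management": SituationalLevel.INTERMEDIATE,
    "stakeholder requirements": SituationalLevel.EXPERT,
}

gaps = competency_gaps(required_profile, assessed_profile)
print(gaps)  # {'risk management': 1, 'technical planning': 2}
```

A gap report of this kind only indicates where development interventions might be directed; it says nothing about how well a competency is applied at a given level.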

Assessment Caution

When using application as a measure of competency, it is important to have a measure of goodness. Just because someone is applying a competency in an exceptionally complex situation, it does not mean they are doing well in this application. Likewise, just because a person is managing and guiding, it does not mean they are doing this well. In addition, an individual might be fully competent in an area, but not be given an opportunity to use that competency. Not all resources are utilized to their full potential.
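The caution above suggests that an assessment record should keep the level at which a competency is applied separate from a measure of how well it is applied, and should distinguish "not observed" from "performed poorly." The following is a minimal sketch of such a record; the field names, the 0.0 to 1.0 quality scale, and the 0.7 threshold are hypothetical choices made for illustration, not values taken from any of the cited models.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CompetencyObservation:
    competency: str
    complexity_level: int      # hypothetical 0-5 scale of situational complexity
    quality: Optional[float]   # hypothetical 0.0-1.0 "goodness" score;
                               # None means no opportunity to apply the competency


def summarize(obs, quality_threshold=0.7):
    """Classify an observation without conflating level and quality."""
    if obs.quality is None:
        # Lack of opportunity is not evidence of lack of competency.
        return "not observed"
    if obs.quality >= quality_threshold:
        return "demonstrated"
    # Applied at the stated level, but not done well.
    return "applied, quality below threshold"


# Illustrative observations only.
print(summarize(CompetencyObservation("risk management", 5, 0.4)))
print(summarize(CompetencyObservation("risk management", 3, 0.9)))
print(summarize(CompetencyObservation("technical planning", 4, None)))
```

In this sketch, an individual working in an exceptionally complex situation (level 5) with a low quality score is not counted as having demonstrated the competency, while an individual with no opportunity to apply it is recorded as unobserved rather than deficient.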

Individual SE Competency vs. Performance

Even if an individual possesses exemplary systems engineering competency, the specific context in which the individual is embedded may preclude exemplary performance of that competency. For example, an individual with exemplary risk management competency may be embedded in a team that does not use that talent, or in an organization whose flawed procedural policies do not fully use this ability. Developing individual competencies is not enough to ensure exemplary SE performance. The final execution and performance of systems engineering is a function of competency, capability, and capacity. The sections on Enabling Teams to Perform Systems Engineering and Enabling Businesses and Enterprises to Perform Systems Engineering address this context.

For an individual, performance assessment can be an objective evaluation of the individual's performance if the systems engineering roles are clearly defined, and the extensive literature on individual performance assessment can be referenced. However, the systems engineering tasks on a project are most often assigned to a team of individuals, and it is then the team's performance that should be assessed.

References

Citations

Academy of Program/Project & Engineering Leadership (APPEL). 2009. NASA's systems engineering competencies. Washington, D.C.: U.S. National Aeronautics and Space Administration.

Bloom, B. S. 1984. Taxonomy of educational objectives. New York, NY: Longman.

DAU. 2010. SPRDE-SE/PSE competency assessment: Employee's user's guide, 5/24/2010 version. Ft. Belvoir, VA, USA: Defense Acquisition University (DAU)/U.S. Department of Defense [database online].

INCOSE. 2010. Systems engineering competencies framework 2010-0205. San Diego, CA, USA: International Council on Systems Engineering (INCOSE), INCOSE-TP-2010-003.

Menrad, R., and H. Lawson. 2008. Development of a NASA integrated technical workforce career development model entitled: Requisite occupation competencies and knowledge--the ROCK. Paper presented at 59th International Astronautical Congress (IAC), 29 September-3 October 2008, Glasgow, Scotland.

MITRE. 2007. Enterprise architecting for enterprise systems engineering. SEPO Collaborations. June 2007, SAE International (accessed August 2010).

Primary References

Academy of Program/Project & Engineering Leadership (APPEL). 2009. NASA's Systems Engineering Competencies. Washington, D.C.: U.S. National Aeronautics and Space Administration.

DAU. 2010. SPRDE-SE/PSE Competency Assessment: Employee's User's Guide, 5/24/2010 version. Ft. Belvoir, VA, USA: Defense Acquisition University (DAU)/U.S. Department of Defense.

INCOSE. 2010. Systems Engineering Competencies Framework 2010-0205. San Diego, CA, USA: International Council on Systems Engineering (INCOSE), INCOSE-TP-2010-003.

Additional References

None

Signatures

--Asquires 15:11, 15 August 2011 (UTC)