Assessing Systems Engineering Performance of Business and Enterprises

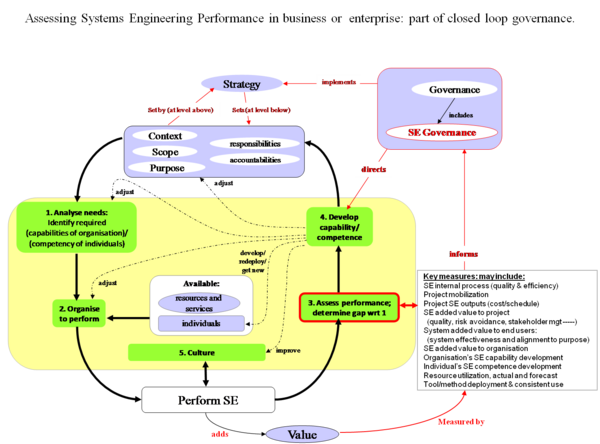

At the project level, systems engineering measurement focuses on indicators of project and system success that are relevant to the project and its stakeholders. At the business and enterprise level there are additional concerns. Systems Engineering Governance should ensure that the performance of systems engineering within the business or enterprise adds value to the organization, is aligned to the organization's purpose, and implements the relevant parts of the organization's strategy. The governance levers that can be used to improve performance include people (selection, training, culture, incentives), process, tools and infrastructure, and organization; therefore, the assessment of systems engineering performance in a business and enterprise should cover these dimensions and inform these choices.

Being able to aggregate high-quality data about the performance of teams with respect to SE activities is certainly of benefit when trying to guide team activities. Having access to comparable data, however, is often difficult, especially in organizations that are relatively autonomous, use different technologies and tools, build products in different domains, have different types of customers, etc. Even if there is limited ability to reliably collect and aggregate data across teams, having a policy that consciously decides how the business/enterprise will address data collection and analysis is valuable.

Relevant Measures

Typical relevant measures for assessing SE performance for a business/enterprise include:

- SE internal process

- Ability to mobilize the right resources at the right time for a new project or new project phase

- Project SE outputs

- SE added value to project

- System added value to end users

- SE added value to organization

- Organization's SE capability development

- Individuals' SE competence development

- Resource utilization, current and forecast

- Deployment and consistent usage of tools and methods

How the Measures Fit in the Governance Process and Improvement Cycle

Since collecting data and analyzing it takes effort - often significant effort - measurement is best deployed when its purpose is clear and part of an overall strategy. Figure 1 shows one way in which appropriate measures inform business or enterprise level governance and drive an improvement cycle such as the Six Sigma DMAIC (Define, Measure, Analyze, Improve, Control) model.

Discussion of Performance Assessment Measures

Assessing SE Internal Process (Quality and Efficiency)

The SEI's Capability Maturity Model Integration (CMMI) (SEI 2010) provides a structured way for businesses and enterprises to assess their SE processes. In the CMMI, a process area is a cluster of related practices in an area that, when implemented collectively, satisfies a set of goals considered important for making improvement in that area. There are CMMI models for acquisition, for development, and for services (SEI 2010, CMMI for Development V1.3, p. 11).

CMMI defines how to assess individual process areas against Capability Levels on a scale from 0 to 3, and overall organizational maturity on a scale from 1 to 5.

Assessing Ability to Mobilize for a New Project or New Project Phase

Successful and timely project initiation and execution depend on having the right people available at the right time. If key resources are deployed elsewhere, they cannot be applied to new projects at the early stages when these resources make the most difference. Queuing theory shows us that if a resource pool is running at or close to capacity, delays and queues are inevitable.
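The queuing effect can be illustrated with a minimal sketch. It assumes the simplest textbook model, a single-server M/M/1 queue (random arrivals, exponentially distributed service times), which is a simplification of a real resource pool; the function name and the utilization values are illustrative only. In this model the mean time a request waits before being served is ρ / (μ − λ), where λ is the arrival rate, μ the service rate, and ρ = λ/μ the utilization.

```python
def mm1_mean_wait(arrival_rate: float, service_rate: float) -> float:
    """Mean time a request waits in queue (excluding service) in an M/M/1 queue.

    Assumption: a single-server queue with Poisson arrivals and exponential
    service times. Real resource pools are more complex; this only shows
    the trend as utilization approaches 100%.
    """
    if arrival_rate < 0 or service_rate <= 0:
        raise ValueError("arrival_rate must be >= 0 and service_rate must be > 0")
    utilization = arrival_rate / service_rate
    if utilization >= 1.0:
        # At or above capacity the queue grows without bound.
        return float("inf")
    return utilization / (service_rate - arrival_rate)


# Service rate fixed at 1 request per unit time, so waits are expressed
# in multiples of the mean service time.
for utilization in (0.5, 0.8, 0.9, 0.95, 0.99):
    wait = mm1_mean_wait(arrival_rate=utilization, service_rate=1.0)
    print(f"utilization {utilization:.0%}: mean wait is about {wait:.1f} service times")
```

Under these assumptions the mean wait is about one service time at 50% utilization, about nine at 90%, and about 99 at 99%, which is why a pool run close to capacity has little ability to respond promptly to a new project.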

The ability to manage teams through their lifecycle - mobilize teams rapidly, establish and tailor an appropriate set of processes, metrics and systems engineering plans, support them to maintain a high level of performance, and capitalize on acquired knowledge and redeploy the team members expeditiously as the team winds down - is an organizational capability that has substantial leverage on project and organizational efficiency and effectiveness.

Specialists and experts are used to review progress, critique solutions, create novel solutions, and solve critical problems. Specialists and experts are usually a scarce resource. Few businesses have the luxury of having enough experts with all the necessary skills and behaviors on tap to allocate to the teams when necessary. If the skills are core to the business' competitive position or governance approach, then it makes sense to manage them through a governance process that ensures their skills are applied to greatest effect across the business. Read more about Systems Engineering Governance.

Any business is likely to find itself performing a balancing act between having enough headroom to keep projects on schedule when things do not go as planned and utilizing resources efficiently.

Project SE Outputs (Cost, Schedule, Quality)

Many of the systems engineering outputs in a project are produced early in the lifecycle to enable downstream activities. Hidden defects in the early phase systems engineering work products may not become fully apparent until the project hits problems in integration, V&V, or transition to operations. Intensive peer review and rigorous modeling are the normal ways of detecting and correcting defects in and lack of coherence between SE work products.

In a mature organization, a good measure of SE quality is the number of defects that have to be corrected "out of phase", that is, after the SE work product has been reviewed and approved for downstream use. This gives a good measure of process performance and the quality of SE outputs. Within a single project, the Work Product Approval, Review Action Closure, and Defect Error trends (SE Leading Indicators Guide 2010) contain information that allows residual defect densities to be estimated (Davies and Hunter 2001).
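As a minimal sketch of the "out of phase" measure, the fraction of defects found after approval can be computed from a defect log. The record structure, field names, and sample data below are hypothetical and are not taken from the Leading Indicators Guide; a real defect database would carry far more detail.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Defect:
    """Hypothetical defect record; the field names are assumptions."""
    work_product: str
    found_after_approval: bool  # True if corrected "out of phase"


def out_of_phase_fraction(defects: Iterable[Defect]) -> float:
    """Fraction of recorded defects that were corrected out of phase."""
    records = list(defects)
    if not records:
        return 0.0
    escaped = sum(1 for d in records if d.found_after_approval)
    return escaped / len(records)


# Illustrative data only.
log = [
    Defect("System requirements specification", found_after_approval=False),
    Defect("System requirements specification", found_after_approval=True),
    Defect("Interface control document", found_after_approval=False),
    Defect("Architecture description", found_after_approval=False),
]
print(f"out-of-phase fraction: {out_of_phase_fraction(log):.0%}")
```

Tracked over successive projects, a falling fraction would suggest that reviews are catching more defects before approval; the measure says nothing about defects that have not yet been found.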

Because of the leverage of front-end SE on overall project performance, it is important to focus on quality and timeliness of SE deliverables. Cost is important too, but if the work is done competently and on time, cost will tend to take care of itself; whereas if the SE work is done badly, downstream cost and schedule over-runs are inevitable (INCOSE UK Chapter Z-3 Guide 2009).

SE Added Value to Project

SE properly managed and performed should add value to the project in terms of quality, risk avoidance, improved coherence, better management of issues and dependencies, right-first-time integration and formal verification, stakeholder management, and effective scope management. Because quality and quantity of SE are not the only factors that influence these outcomes, and because the effect is a delayed one (good SE early in the project pays off in later phases) there has been a significant amount of research to establish evidence to underpin the asserted benefits of SE in projects.

A summary of the main results is provided in the value proposition for systems engineering section.

System Added Value to End Users

System added value to end users depends on system effectiveness and on alignment of the requirements and design to the end users' purpose and mission. System end users are often only involved indirectly in the procurement process. The research on the value proposition of systems engineering shows that good project outcomes do not necessarily correlate with good end user experience. Sometimes systems developers are discouraged from talking to end users because the acquirer is afraid of requirements creep. There is experience to the contrary, that end user involvement can result in more successful and simpler system solutions.

Two specific measures are indicative of end user satisfaction:

- The use of user-validated mission scenarios (both nominal and "rainy day" situations) to validate requirements, drive trade-offs and organize testing and acceptance;

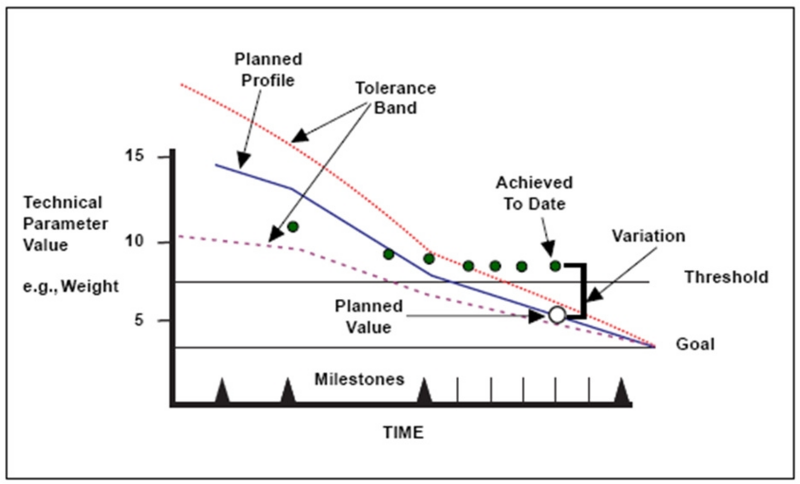

- The use of technical performance measures (TPMs) to track critical performance and non-functional system attributes directly relevant to operational utility. The INCOSE SE Leading Indicators Guide (p 10 and pp 68 et seq) defines "technical measurement trends" as "Progress towards meeting the Measures of Effectiveness (MOEs) / Measures of Performance (MOPs) / Key Performance Parameters (KPPs) and Technical Performance Measures (TPMs)". A typical TPM progress plot is shown in Figure 2.
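TPM tracking of the kind described in the second measure can be sketched as a comparison of each measured value against a planned profile with a tolerance band. The class, field names, milestone labels, and numbers below are hypothetical illustrations, not values or definitions from the Leading Indicators Guide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TpmSample:
    """One TPM reading at a milestone; all field names are assumptions."""
    milestone: str
    planned: float    # planned value of the measure at this milestone
    tolerance: float  # allowed deviation either side of the plan
    actual: float     # estimated or measured value


def tpm_status(sample: TpmSample) -> str:
    """Classify a reading relative to its planned value and tolerance band."""
    deviation = sample.actual - sample.planned
    if abs(deviation) <= sample.tolerance:
        return "within tolerance"
    return "above plan" if deviation > 0 else "below plan"


# Illustrative profile for a measure such as system mass in kg.
history = [
    TpmSample("Requirements review", planned=120.0, tolerance=10.0, actual=126.0),
    TpmSample("Preliminary design review", planned=115.0, tolerance=7.0, actual=124.0),
    TpmSample("Critical design review", planned=110.0, tolerance=5.0, actual=112.0),
]
for sample in history:
    print(f"{sample.milestone}: {tpm_status(sample)}")
```

Whether "above plan" is good or bad depends on the measure: it is unfavourable for mass or power consumption and favourable for range or availability, so the interpretation has to be set per TPM.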

SE Added Value to Organization

SE at the organizational level aims to develop, deploy and enable effective systems engineering to add value to the organization’s business. The Systems Engineering function in the business or enterprise should understand the part it has to play in the bigger picture, and identify appropriate performance measures - derived from the business or enterprise goals, and coherent with those of other parts of the organization - so that it can optimize its contribution and justify the resources allocated to it.

Organization's SE Capability Development

CMMI V1.3 (SEI 2010) provides a means of assessing the process capability and maturity of businesses and enterprises. The higher CMMI levels are concerned with systemic integration of capabilities across the business or enterprise.

CMMI measures one important dimension of capability development, but CMMI maturity level is not a direct measure of business effectiveness unless the SE measures are properly integrated with business performance measures. These may include bid success rate, market share, position in value chain, development cycle time and cost, level of innovation and re-use, and the effectiveness with which SE capabilities are applied to the specific problem and solution space of interest to the business.

Individuals' SE Competence Development

Assessment of Individuals' SE competence development is described in the Assessing Individuals section of the SEBoK.

Resource Utilization, Current and Forecast

The INCOSE Systems Engineering Leading Indicators Guide (pp 58 et seq) proposes various metrics for staff ramp-up and use on a project. Across the business or enterprise, key indicators include the overall manpower trend across the projects, the stability of the forward load, levels of overtime, the resource headroom (if any), staff turnover, level of training, and the period of time for which key resources are committed.
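Two of these indicators, resource headroom and the forward load, can be sketched as follows. The definition of headroom used here (unused capacity as a fraction of total capacity) and all names and figures are assumptions for illustration; the Leading Indicators Guide should be consulted for its own metric definitions.

```python
def headroom(capacity_hours: float, committed_hours: float) -> float:
    """Unused capacity as a fraction of total capacity (assumed definition).

    A negative result means the pool is over-committed.
    """
    if capacity_hours <= 0:
        raise ValueError("capacity_hours must be positive")
    return (capacity_hours - committed_hours) / capacity_hours


def forecast_headroom(capacity_hours: float,
                      forward_load: dict[str, float]) -> dict[str, float]:
    """Headroom per future period, given committed hours per period."""
    return {period: headroom(capacity_hours, load)
            for period, load in forward_load.items()}


# Illustrative forward load for a pool with 1000 hours available per quarter.
load_by_quarter = {"Q1": 900.0, "Q2": 980.0, "Q3": 1100.0}
for period, value in forecast_headroom(1000.0, load_by_quarter).items():
    flag = " (over-committed)" if value < 0 else ""
    print(f"{period}: headroom {value:.0%}{flag}")
```

A forecast that shows headroom shrinking or turning negative in coming periods gives early warning that new projects cannot be staffed without delaying existing ones.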

Deployment and Consistent Usage of Tools and Methods

"To err is human - to really foul things up we need a computer!" (anonymous)

It is common practice to use a range of software tools in an effort to manage the complexity of system development and in-service management. These range from simple office suites to complex logical, virtual reality and physics-based modeling environments.

Deployment of SE tools requires careful consideration of purpose, business objectives, business effectiveness, training, aptitude, method, style, infrastructure, support, integration of the tool with the existing or revised SE process, and approaches to ensure consistency, longevity and appropriate configuration management of information. Systems may be in service for upwards of 50 years, yet storage media and file formats as little as 10-15 years old are unreadable on most modern computers. It is desirable for many users to be able to work with a single common model; in practice, two engineers sitting next to each other and using the same tool may use sufficiently different modeling styles that they cannot work on or re-use each other's models.

License usage over time and across sites and projects is a key indicator of extent and efficiency of tool deployment. More difficult to assess is the consistency of usage. The INCOSE Systems Engineering Leading Indicators Guide p 73 et seq recommends metrics on "facilities and equipment availability".
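License usage across sites can be summarized with a sketch such as the one below, which reports peak concurrent use as a fraction of licenses owned. The input format (periodic samples of concurrent use per site), the site names, and the figures are hypothetical; real license servers report usage in their own formats.

```python
from collections import defaultdict
from typing import Iterable


def peak_license_utilization(samples: Iterable[tuple[str, int]],
                             licenses_owned: dict[str, int]) -> dict[str, float]:
    """Peak concurrent licenses in use per site, as a fraction of licenses owned.

    `samples` is an iterable of (site, licenses_in_use) readings taken over time.
    Sites that own no licenses are skipped.
    """
    peak: dict[str, int] = defaultdict(int)
    for site, in_use in samples:
        peak[site] = max(peak[site], in_use)
    return {site: peak[site] / owned
            for site, owned in licenses_owned.items() if owned > 0}


# Illustrative readings only.
readings = [("Site A", 4), ("Site B", 10), ("Site A", 8), ("Site B", 9), ("Site A", 6)]
owned = {"Site A": 10, "Site B": 10, "Site C": 5}
for site, fraction in peak_license_utilization(readings, owned).items():
    print(f"{site}: peak utilization {fraction:.0%}")
```

A site that peaks near 100% may be constrained by licenses, while one that never records any use may indicate that the tool has not actually been deployed there. Neither figure says anything about how consistently the tool is used.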

Practical Considerations (Pitfalls, Good Practice, etc.)

Assessment of SE performance at organizational level is complex and needs to consider soft as well as hard issues. Stakeholder concerns and satisfaction criteria may not be obvious or explicit. Clear and explicit reciprocal expectations and alignment of purpose, values, goals and incentives help to achieve synergy across the organization and avoid misunderstanding.

"What gets measured gets done". Metrics drive behaviour, so it is important to ensure that the metrics used to manage the organization reflect its purpose and values, and do not drive perverse behaviours (SE Measurement Primer, INCOSE 2010).

Process and measurement cost money and time, so it is important to get the "right" amount of process definition, and the right balance of investment between process, measurement, people and skills. Any process flexible enough to allow innovation will also be flexible enough to allow mistakes. If process is seen as excessively restrictive or prescriptive, in an effort to prevent mistakes, it may inhibit innovation and demotivate the innovators, leading to excessively risk averse behaviour.

It is possible for a CMMI effort to become an end in itself rather than a means to improve business performance (Sheard 2003). To guard against this, it is advisable to remain clearly focused on purpose (Blockley and Godfrey 2000) and on added value (Oppenheim et al. 2010), and to ensure clear and sustained top management commitment to driving the CMMI approach to achieve the required business benefits. CMMI is as much about establishing a performance culture as about process per se.

"The Systems Engineering process is an essential complement to, and is not a substitute for, individual skill, creativity, intuition, judgment etc. Innovative people need to understand the process and how to make it work for them, and neither ignore it nor be slaves to it. Systems Engineering measurement shows where invention and creativity need to be applied. SE process creates a framework to leverage creativity and innovation to deliver results that surpass the capability of the creative individuals – results that are the emergent properties of process, organisation, and leadership." (Sillitto 2011)

References

Citations

- Blockley, D. and P. Godfrey. 2000. Doing It Differently – Systems For Rethinking Construction. London, UK: Thomas Telford Ltd.

- Davies, P. and N. Hunter. 2001. System Test Metrics on a Development-Intensive Project. Thales: Proceedings from the 2001 INCOSE International Symposium.

- Woodcock, H. 2009. Why Invest in Systems Engineering: How Systems Engineering Can Save Your Business Money. Z-3 Guide, Issue 3.0 (March 2009). Somerset, England: INCOSE UK Chapter.

- Oppenheim et al. 2010. Lean Enablers for Systems Engineering. INCOSE Lean SE WG. Hoboken, NJ, USA: Wiley Periodicals, Inc. (Accessed September 2, 2011)

- SEI. 2010. Capability Maturity Model Integration (CMMI) for Development, version 1.3. Pittsburgh, PA, USA: Software Engineering Institute (SEI)/Carnegie Mellon University (CMU).

- Sheard, S. 2003. "The Lifecycle of a Silver Bullet". Crosstalk: The Journal of Defense Software Engineering. July 2003.

- Sillitto, H. 2011. Panel on "People or Process, Which is More Important". Presented at the INCOSE International Symposium, Denver, CO, in June 2011.

- Systems Engineering Leading Indicators Guide, Version 2.0, January 29, 2010, Editors: Garry Roedler, Lockheed Martin Corporation, Donna H. Rhodes, Massachusetts Institute of Technology, Howard Schimmoller, Lockheed Martin Corporation, Cheryl Jones, US Army. Published jointly by LAI, SEARI, INCOSE, PSM

Primary References

- Frenz, Paul et al. 2010. Systems Engineering Measurement Primer: A Basic Introduction to Measurement Concepts and Use for Systems Engineering. Version 2.0. San Diego, CA, USA: International Council on Systems Engineering (INCOSE). INCOSE-TP-2010-005-02. Available at: http://www.incose.org/ProductsPubs/pdf/INCOSE_SysEngMeasurementPrimer_2010-1205.pdf.

- Roedler, G., D.H. Rhodes, H. Schimmoller, and C. Jones (eds). 2010. Systems Engineering Leading Indicators Guide, version 2.0. Published jointly by LAI, SEARI, INCOSE, and PSM.

Additional References

- Jelinski, Z. and P.B. Moranda. 1972. "Software Reliability Research". In Statistical Computer Performance Evaluation, W. Freiberger (ed.), pp. 465-484. New York, New York, USA: Academic Press.

- Alhazmi, O.H. and Y.K. Malaiya. 2005. Modeling the Vulnerability Discovery Process. 16th IEEE International Symposium on Software Reliability Engineering (ISSRE'05), pp. 129-138.

- Alhazmi, O.H. and Y.K. Malaiya. 2006. Prediction Capabilities of Vulnerability Discovery Models. Proceedings from the Annual Reliability and Maintainability Symposium (RAMS '06), January 23-26, 2006, pp. 86-91. doi: 10.1109/RAMS.2006.1677355. Newport Beach, CA, USA: IEEE. Available at http://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=1677355&isnumber=34933 (Accessed September 7, 2011)
