Decision Management

The purpose of decision management is to analyze possible decisions using a formal evaluation process that evaluates identified alternatives against established decision criteria. It involves establishing guidelines to determine which issues should be subjected to a formal evaluation process, and then applying formal evaluation processes to these issues.

Making decisions is one of the most important processes practiced by systems engineers, project managers, and all team members. Sound decisions are based on good judgment and experience. There are concepts, methods, processes, and tools that can assist in the process of decision making, especially in making comparisons of decision alternatives. These tools can also assist in building team consensus in selecting and supporting the decision made and in defending it to others.

Decision analysis is the technical discipline of identifying the best option among a set of alternatives under uncertainty. Many analysis methods are used in the multidimensional tradeoffs of systems engineering under varying degrees of uncertainty. These range from non-probabilistic decision rules that ignore the likelihood of chance outcomes, through expected value rules, to more general utility approaches. Decision judgment and analysis methods are described after the overview of organizational processes.
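To make the progression of decision rules concrete, the following minimal Python sketch contrasts two non-probabilistic rules (maximin and maximax, chosen here only as familiar examples) with an expected value rule. The payoff table, alternative names, and probabilities are hypothetical and do not come from any source cited in this article.

```python
# Hypothetical payoffs for each alternative under two chance outcomes
# (favorable, unfavorable). All numbers are illustrative assumptions.
payoffs = {
    "A": [10.0, 2.0],
    "B": [6.0, 5.0],
    "C": [8.0, 3.0],
}
probabilities = [0.5, 0.5]  # assumed likelihoods of the two outcomes


def maximin(table):
    """Pessimistic rule: pick the alternative with the best worst-case payoff."""
    return max(table, key=lambda alt: min(table[alt]))


def maximax(table):
    """Optimistic rule: pick the alternative with the best best-case payoff."""
    return max(table, key=lambda alt: max(table[alt]))


def expected_value_choice(table, probs):
    """Probabilistic rule: pick the alternative with the highest expected payoff."""
    return max(table, key=lambda alt: sum(p * x for p, x in zip(probs, table[alt])))


print("maximin:", maximin(payoffs))
print("maximax:", maximax(payoffs))
print("expected value:", expected_value_choice(payoffs, probabilities))
```

With these assumed numbers the pessimistic rule and the expected value rule select different alternatives, which illustrates why the choice of decision rule is itself part of the analysis.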

Process Overview

The best practices of decision management for evaluating alternatives are described below, based on (2007) and grouped by specific practices.

- Establish Guidelines for Decision Analysis. Guidelines should be established to determine which issues are subject to a formal evaluation process, as not every decision is significant enough to warrant formality. Whether a decision is significant or not is dependent on the project and circumstances, and is determined by the established guidelines.

- Establish Evaluation Criteria. Criteria for evaluating alternatives and the relative ranking of these criteria are established. The evaluation criteria provide the basis for evaluating alternative solutions. The criteria are ranked so that the highest ranked criteria exert the most influence. There are many contexts in which a formal evaluation process can be used by systems engineering (SE), so the criteria may have already been defined as part of another process.

- Identify Alternative Solutions. Identify alternative solutions to address program issues. Soliciting more stakeholders with diverse skills and backgrounds helps surface a wider range of alternatives and helps identify and address assumptions, constraints, and biases. Brainstorming sessions may stimulate innovative alternatives through rapid interaction and feedback. The candidate solutions initially furnished for analysis may not be sufficient; as the analysis proceeds, other alternatives should be added to the list of potential candidates. Generating and considering multiple alternatives early in a decision process increases the likelihood that an acceptable decision will be made and that the consequences of the decision will be understood.

- Select Evaluation Methods. Methods for evaluating alternative solutions against established criteria can range from simulations to the use of probabilistic models and decision theory. These methods need to be carefully selected. The level of detail of a method should be commensurate with cost, schedule, performance, and associated risk. Typical analysis evaluation methods include the following:

  - Modeling and simulation.

  - Analysis studies on business opportunities, engineering, manufacturing, cost, etc.

  - Surveys and user reviews.

  - Extrapolations based on field experience and prototypes.

  - Testing.

  - Judgment provided by an expert or group of experts (e.g., Delphi Method).

- Evaluate Alternatives. Evaluate alternative solutions using the established criteria and methods. Evaluating alternative solutions involves analysis, discussion, and review. Iterative cycles of analysis are sometimes necessary. Supporting analyses, experimentation, prototyping, piloting, or simulations may be needed to substantiate scoring and conclusions.

- Select Solutions. Select solutions from the alternatives based on the evaluation criteria. Selecting solutions involves weighing the results from the evaluation of alternatives and the corresponding risks.

Decision Judgment Methods

Common methods for judging alternatives range from indifference to probability based judgment. Methods that the practitioner should be aware of include:

- Emotion based judgment. Once a decision is made public, the decision-makers will vigorously defend their choice, even in the face of contrary evidence, because it is easy to become emotionally tied to the decision. Another phenomenon is that people often need “permission” to support an action or idea, as explained by Cialdini (2006), and this inherent human trait also suggests why teams often resist new ideas.

- Intuition based judgment. Intuition plays a key role in leading development teams to creative solutions. Gladwell (2005) makes the argument that we intuitively see the powerful benefits or fatal flaws inherent in a newly proposed solution. Intuition can be an excellent guide when based on relevant past experience but it may blind you to as-yet undiscovered concepts. Ideas generated based on intuition should be considered seriously, but should be treated as an output of a brainstorming session, and evaluated using one of the next three approaches. Also, see Skunk Works (Rich and Janos 1996) for more on intuitive decisions.

- Expert based judgment. For certain problems, especially ones involving technical expertise outside your field, calling in experts is a cost effective approach. The decision-making challenge is to establish perceptive criteria for selecting the right experts.

- Fact based judgment. This is the most common situation and is discussed in more detail below.

- Probability based judgment. SE methods are used to deal with uncertainty, and this topic is also elaborated below.

Fact Based Decision Making

Informed decision-making requires a clear statement of objectives, a clear understanding of the value of the outcome, a gathering of relevant information, an appropriate assessment of alternatives, and a logical process to make a selection.

Regardless of the method, the starting point is to identify an appropriate (usually small) team to frame and challenge the decision statement. The decision statement should be concise, but the decision maker and the team should iterate until they have considered positive and negative consequences of the way they have expressed their objective.

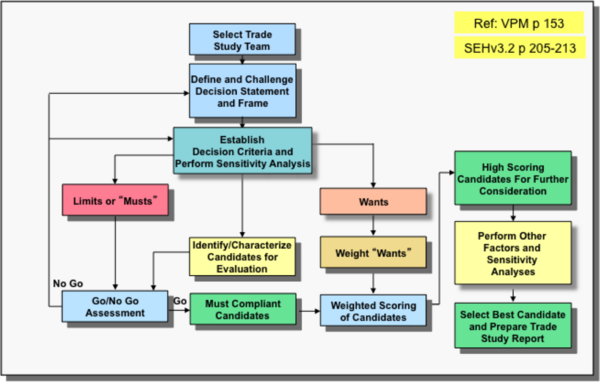

Once the decision maker and team accept the decision statement, the next step is to define the decision criteria. As shown in the next figure the criteria fall into two categories: “Musts” and “Wants.” Any candidate solution that does not satisfy a “Must” should be rejected, no matter how attractive all other aspects of the candidate are.

If a candidate solution you feel is promising fails the “Must” requirements, there is nothing wrong in challenging the requirements, as long as this is done with open awareness to avoid bias. This is a judgmental process and the resulting matrix is a decision support guide, not a mandatory theoretical constraint. A sample flowchart to assist in fact based judgment from Visualizing Project Management is below (Forsberg, Mooz, and Cotterman 2005, 154-155).

The next step is to define the desirable characteristics of the solution (the “Wants”) and develop a relative weighting for them. If no weighting is used, all criteria are implicitly of equal importance. One fatal flaw is for the team to create too many criteria (15, 20, or more), since this tends to obscure important differences among the candidate solutions.
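The “Musts” and “Wants” logic described above can be sketched in a few lines of Python. This is a minimal illustration, not the procedure from the cited text: the candidate names, criteria, weights, and the 0 to 10 rating scale are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    meets_musts: dict   # "Must" criterion -> True/False
    want_ratings: dict  # "Want" criterion -> rating (assumed 0-10 scale)


def screen_and_score(candidates, want_weights):
    """Reject candidates that fail any Must, then rank the rest by weighted Wants."""
    survivors = [c for c in candidates if all(c.meets_musts.values())]
    scored = [
        (c.name, sum(want_weights[k] * c.want_ratings[k] for k in want_weights))
        for c in survivors
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical data for illustration only.
candidates = [
    Candidate("A", {"fits budget": True, "meets safety standard": True},
              {"ease of use": 7, "maintainability": 5}),
    Candidate("B", {"fits budget": True, "meets safety standard": False},
              {"ease of use": 10, "maintainability": 10}),
    Candidate("C", {"fits budget": True, "meets safety standard": True},
              {"ease of use": 4, "maintainability": 9}),
]
want_weights = {"ease of use": 10, "maintainability": 6}

ranking = screen_and_score(candidates, want_weights)
for name, score in ranking:
    print(name, score)
```

Candidate B is dropped despite having the highest ratings on every “Want”, because it fails a “Must”. Whether that “Must” should be challenged remains a judgment call for the team.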

There are a number of approaches, starting with Pugh’s Method, in which each candidate solution is compared to a reference standard and rated as equal, better, or inferior for each of the criteria. A more perceptive approach is the Kepner-Tregoe decision matrix described in (Forsberg, Mooz, and Cotterman 2005, 154-155).
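As a rough illustration of the Pugh-style comparison, the sketch below tallies better, equal, and inferior ratings against a reference. The symbols ("+", "S", "-"), the concept names, and the criteria are assumptions made for this example, and the simple net score is only one of several ways practitioners summarize such a matrix.

```python
# Map each rating symbol to a numeric value relative to the reference standard.
SYMBOL_VALUE = {"+": 1, "S": 0, "-": -1}  # better, same (equal), inferior


def pugh_scores(ratings):
    """Return the net score (count of '+' minus count of '-') per candidate."""
    return {
        name: sum(SYMBOL_VALUE[symbol] for symbol in per_criterion.values())
        for name, per_criterion in ratings.items()
    }


# Hypothetical ratings of three concepts against a reference design.
ratings = {
    "Concept 1": {"cost": "+", "reliability": "S", "mass": "-"},
    "Concept 2": {"cost": "+", "reliability": "+", "mass": "S"},
    "Concept 3": {"cost": "-", "reliability": "-", "mass": "+"},
}

scores = pugh_scores(ratings)
for name, score in sorted(scores.items(), key=lambda item: item[1], reverse=True):
    print(name, score)
```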

When the relative comparison is completed, scores within five percent of each other are essentially equal. An opportunity and risk assessment should be performed on the best candidates, along with a sensitivity analysis on the scores and weights, so that the robustness (or fragility) of the decision is known.
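One simple way to probe robustness is to swing each weight up and down and check whether the top-ranked candidate changes. The sketch below does this and also applies the five percent rule; the weights, ratings, the 25 percent swing, and the function names are hypothetical choices for illustration.

```python
def weighted_total(weights, ratings):
    """Sum of weight * rating over all criteria."""
    return sum(weights[c] * ratings[c] for c in weights)


def essentially_equal(score_a, score_b, tolerance=0.05):
    """Treat two scores as tied if they differ by at most 5% of the larger one."""
    return abs(score_a - score_b) <= tolerance * max(abs(score_a), abs(score_b))


def weight_sensitivity(weights, ratings_by_alt, swing=0.25):
    """Return the baseline winner and the criteria whose weight swing changes it."""
    def best(w):
        return max(ratings_by_alt, key=lambda alt: weighted_total(w, ratings_by_alt[alt]))

    baseline = best(weights)
    sensitive = []
    for criterion in weights:
        for factor in (1 - swing, 1 + swing):
            perturbed = dict(weights)
            perturbed[criterion] = weights[criterion] * factor
            if best(perturbed) != baseline:
                sensitive.append(criterion)
                break
    return baseline, sensitive


# Hypothetical weights and ratings for two candidates.
weights = {"performance": 5, "cost": 4, "schedule": 2}
ratings_by_alt = {
    "X": {"performance": 8, "cost": 5, "schedule": 6},
    "Y": {"performance": 6, "cost": 8, "schedule": 6},
}

score_x = weighted_total(weights, ratings_by_alt["X"])
score_y = weighted_total(weights, ratings_by_alt["Y"])
baseline, sensitive = weight_sensitivity(weights, ratings_by_alt)
print(score_x, score_y, essentially_equal(score_x, score_y))
print(baseline, sensitive)
```

In this assumed case the two totals fall within five percent of each other and the winner flips when either of two weights is changed, which is the kind of fragility the assessment is meant to expose.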

In the preceding example all criteria are compared to the highest-value criterion. Another approach is to create a full matrix of pair-wise comparisons of all criteria against each other and, from that, calculate the relative importance using the Analytic Hierarchy Process (AHP). The process is still judgmental, and the results are no more accurate, but several computerized decision support tools have made effective use of this process (Saaty 2008).
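A minimal sketch of how relative importance can be derived from a pair-wise comparison matrix follows. It estimates the principal eigenvector by power iteration, which is one common way to compute AHP priorities, and reports a consistency index. The criteria names and the judgments in the matrix are hypothetical, and this is not a description of any particular tool.

```python
def ahp_priorities(matrix, iterations=100):
    """Estimate priority weights as the normalized principal eigenvector."""
    n = len(matrix)
    weights = [1.0 / n] * n
    for _ in range(iterations):
        product = [sum(matrix[i][j] * weights[j] for j in range(n)) for i in range(n)]
        total = sum(product)
        weights = [value / total for value in product]
    return weights


def consistency_index(matrix, weights):
    """(lambda_max - n) / (n - 1); zero for perfectly consistent judgments."""
    n = len(matrix)
    product = [sum(matrix[i][j] * weights[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(product[i] / weights[i] for i in range(n)) / n
    return (lambda_max - n) / (n - 1)


# Hypothetical judgments: entry [i][j] says how much more important
# criterion i is than criterion j; entry [j][i] is the reciprocal.
criteria = ["performance", "cost", "schedule"]
comparisons = [
    [1.0, 2.0, 6.0],
    [1 / 2, 1.0, 3.0],
    [1 / 6, 1 / 3, 1.0],
]

priorities = ahp_priorities(comparisons)
for name, weight in zip(criteria, priorities):
    print(f"{name}: {weight:.3f}")
print("consistency index:", round(consistency_index(comparisons, priorities), 6))
```

The example matrix was deliberately built to be perfectly consistent, so the consistency index is essentially zero. Real judgments are rarely this tidy, and a larger index signals that the pair-wise comparisons should be revisited.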

Probability Based Decision Analysis

Probability based decisions are made when there is uncertainty. Decision management techniques and tools for decisions based on uncertainty include probability theory, utility functions, decision tree analysis, modeling, and simulation. A classic, mathematically oriented reference for understanding decision trees and probability analysis is (Raiffa 1997). Another classic introduction, with more of an applied focus, is (Schlaiffer 1969). Modeling and simulation are covered in the popular textbook (Law 2007), which also has good coverage of Monte Carlo analysis. Some of the more commonly used and fundamental methods are summarized below.

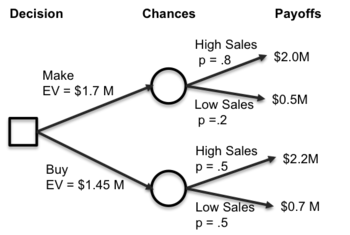

Decision trees and influence diagrams are visual analytical decision support tools where the expected values (or expected utility) of competing alternatives are calculated. A decision tree uses a tree-like graph or model of decisions and their possible consequences, including chance event outcomes, resource costs, and utility. Influence diagrams are used for decision models as alternate, more compact graphical representations of decision trees.

The figure below demonstrates a simplified make vs. buy decision analysis tree and the associated calculations. Suppose making a product costs $200K more than buying an alternative off the shelf, reflected as a difference in the net payoffs in the figure. The custom development is also expected to be a better product, with a correspondingly larger probability of high sales: 80% vs. 50% for the bought alternative. With these assumptions, the monetary expected value of the make alternative is 0.8 × 2.0M + 0.2 × 0.5M = 1.7M and that of the buy alternative is 0.5 × 2.2M + 0.5 × 0.7M = 1.45M, so the make alternative has the higher expected monetary value.
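The expected value calculation for the make vs. buy tree can be reproduced with a short Python sketch. The probabilities and payoffs (in $M) are the ones stated in the text; the data layout and function name are illustrative choices.

```python
def expected_value(outcomes):
    """Expected payoff of one chance node given (probability, payoff) pairs."""
    total_probability = sum(p for p, _ in outcomes)
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError("probabilities at a chance node must sum to 1")
    return sum(p * payoff for p, payoff in outcomes)


# Each alternative leads to a chance node: (probability, net payoff in $M).
tree = {
    "make": [(0.8, 2.0), (0.2, 0.5)],  # high sales, low sales
    "buy": [(0.5, 2.2), (0.5, 0.7)],
}

values = {alternative: expected_value(outcomes) for alternative, outcomes in tree.items()}
best = max(values, key=values.get)

for alternative, value in values.items():
    print(f"{alternative}: {value:.2f}M")
print("highest expected value:", best)
```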

Influence diagrams focus attention on the issues and relationships between events. They are generalizations of Bayesian networks whereby maximum expected utility criteria can be modeled. A good reference is (Detwarasiti and Shachter 2005, 207-228) for using influence diagrams in team decision analysis.

Expected utility is more general than expected value. Utility is a measure of relative satisfaction that takes into account the decision maker's preference function, which may be nonlinear. Expected utility theory deals with the analysis of choices with multidimensional outcomes. The analyst should determine the decision-maker's utility for money and select the alternative course of action that yields the highest expected utility, rather than the highest expected monetary value. A classic reference on applying multiple objective methods, utility functions, and allied techniques is (Kenney and Raiffa 1976). References with applied examples of decision tree analysis and utility functions include (Samson 1988) and (Skinner 1999).
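To illustrate how a nonlinear preference function can change the choice, the sketch below applies an exponential utility function to the make vs. buy payoffs given earlier. The exponential form and the two risk tolerance values are assumptions made only for this example; in practice the utility function would be elicited from the decision maker.

```python
import math

# (probability, net payoff in $M) pairs from the make vs. buy example.
alternatives = {
    "make": [(0.8, 2.0), (0.2, 0.5)],
    "buy": [(0.5, 2.2), (0.5, 0.7)],
}


def exponential_utility(payoff, risk_tolerance):
    """Assumed concave utility: smaller risk_tolerance means more risk aversion."""
    return 1.0 - math.exp(-payoff / risk_tolerance)


def expected_utility(outcomes, risk_tolerance):
    """Probability-weighted utility of one alternative."""
    return sum(p * exponential_utility(x, risk_tolerance) for p, x in outcomes)


def preferred(risk_tolerance):
    """Alternative with the highest expected utility at this risk tolerance."""
    return max(
        alternatives,
        key=lambda alt: expected_utility(alternatives[alt], risk_tolerance),
    )


for risk_tolerance in (1.0, 0.1):  # hypothetical values, in $M
    print(risk_tolerance, preferred(risk_tolerance))
```

Under these assumptions, a moderately risk-averse decision maker (risk tolerance 1.0) still prefers the make alternative, while a strongly risk-averse one (risk tolerance 0.1) prefers the buy alternative because of its better worst-case payoff, even though its expected monetary value is lower.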

Systems engineers often have to consider many different criteria when making choices about system tradeoffs. Taken individually, these criteria could lead to very different choices. A weighted objectives analysis is a rational basis for incorporating the multiple criteria into the decision.

Each criterion is weighted a certain amount depending on its importance relative to the others. The table below shows an example deciding between two alternatives using their criteria weights, ratings, weighted ratings, and weighted totals to decide between the alternatives.

| Criteria | Weight | Alternative A Rating | Alternative A Weight * Rating | Alternative B Rating | Alternative B Weight * Rating |
|---|---|---|---|---|---|
| Better | 0.5 | 4 | 2.0 | 10 | 5.0 |
| Faster | 0.3 | 8 | 2.4 | 5 | 1.5 |
| Cheaper | 0.2 | 5 | 1.0 | 3 | 0.6 |
| Total Weighted Score | | | 5.4 | | 7.1 |
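The weighted totals in the table can be checked with a few lines of Python. The weights and ratings are taken from the table; the variable and function names are illustrative.

```python
weights = {"Better": 0.5, "Faster": 0.3, "Cheaper": 0.2}

ratings = {
    "Alternative A": {"Better": 4, "Faster": 8, "Cheaper": 5},
    "Alternative B": {"Better": 10, "Faster": 5, "Cheaper": 3},
}


def weighted_total(weights, alternative_ratings):
    """Sum of weight * rating over all criteria for one alternative."""
    return sum(weights[c] * alternative_ratings[c] for c in weights)


totals = {name: weighted_total(weights, r) for name, r in ratings.items()}
for name, total in totals.items():
    print(f"{name}: {total:.1f}")
print("preferred:", max(totals, key=totals.get))
```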

There are numerous other methods used in decision analysis. One of the simplest is sensitivity analysis, which looks at the relationships between the outcomes and their probabilities to find how sensitive a decision point is to changes in inputs. Value of information methods expend effort on data analysis and modeling to improve the optimum expected value. Multi Attribute Utility Analysis (MAUA) is a method that develops equivalencies between dissimilar units of measure.
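As a small example of sensitivity analysis, the sketch below asks how low the probability of high sales for the make alternative could fall before the buy alternative becomes preferable on expected value. It reuses the payoffs (in $M) from the make vs. buy example; the function names and the swept probability values are illustrative assumptions.

```python
def expected_value(p_high, high, low):
    """Expected payoff of a two-outcome chance node."""
    return p_high * high + (1.0 - p_high) * low


def break_even_probability(target, high, low):
    """Probability of the high outcome at which expected value equals target."""
    return (target - low) / (high - low)


# Buy alternative held fixed at the values used in the example.
ev_buy = expected_value(0.5, high=2.2, low=0.7)

# Probability of high sales at which make and buy have equal expected value.
p_star = break_even_probability(ev_buy, high=2.0, low=0.5)
print(f"break-even probability for make: {p_star:.3f}")

# Sweep the uncertain input to see where the preferred alternative changes.
for p in (0.5, 0.6, 0.7, 0.8):
    ev_make = expected_value(p, high=2.0, low=0.5)
    choice = "make" if ev_make > ev_buy else "buy"
    print(f"p = {p:.1f}: make = {ev_make:.2f}M, buy = {ev_buy:.2f}M -> {choice}")
```

If the break-even probability is far from the estimated 80%, the decision is robust to errors in that estimate; if it is close, further data gathering may be worthwhile.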

(Blanchard 2004) shows a variety of these decision analysis methods in many technical decision scenarios. A comprehensive reference demonstrating decision analysis methods for software-intensive systems is (Boehm 1981, 32-41). It is a major treatment of multiple goal decision analysis, dealing with uncertainties, risks, and the value of information.

Facets of a decision situation that cannot be explained by a quantitative model should be reserved for the intuition and judgment of the decision maker. Sometimes outside parties are also called upon. One method to canvass experts is the Delphi Technique, a procedure for organizing and sharing expert forecasts about future outcomes or parameter values. It is a method of group decision-making and forecasting that involves successively collating the judgments of experts. A variant called the Wideband Delphi technique, described in (Boehm 1981, 32-41), improves upon the standard Delphi with more rigorous iterations of statistical analysis and feedback forms.
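The collation step of a Delphi round can be sketched as follows. This is only an illustration: the choice of median and interquartile range as the feedback statistics, the function name, and the sample estimates are assumptions, not a procedure prescribed by the cited sources.

```python
import statistics


def summarize_round(estimates):
    """Collate one round of expert estimates into feedback for the next round."""
    ordered = sorted(estimates)
    q1, _, q3 = statistics.quantiles(ordered, n=4)  # quartile cut points
    return {
        "median": statistics.median(ordered),
        "interquartile_range": (q1, q3),
        # Experts outside the middle range may be asked to explain their reasoning.
        "outside_iqr": [x for x in ordered if x < q1 or x > q3],
    }


# Hypothetical first-round estimates (for example, effort in person-months).
round_one = [10, 12, 15, 20, 40]
summary = summarize_round(round_one)
print(summary)
```

In later rounds the experts would see this summary, revise their estimates, and the process would repeat until the spread is acceptably narrow or stops changing.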

General tools, such as spreadsheets and simulation packages, can be used with these methods. There are also tools targeted specifically at aspects of decision analysis such as decision trees, evaluation of probabilities, Bayesian influence networks, and others. The INCOSE website for the tools database (INCOSE 2010, 1) has an extensive list of analysis tools.

Linkages to Other Systems Engineering Management Topics

Decision management is used in many other process areas due to numerous contexts for a formal evaluation process, both technical and management. It is closely coupled with other management areas. Risk Management in particular uses decision analysis methods for risk evaluation and mitigation decisions, and a formal evaluation process to address medium or high risks. The Measurement process describes how to derive quantitative indicators as input to decisions. Project Assessment and Control uses decision results for controlling. Refer to the Planning process area for more information about incorporating decision results into project plans.

Practical Considerations

Key pitfalls and good practices related to decision analysis are described below.

Pitfalls

Some of the key pitfalls are below.

| Name | Description |
|---|---|
| False confidence | |
| No external validation | |
| Errors and false assumptions | |
| Impractical application | |

Good Practices

Some good practices are below.

| Name | Description |
|---|---|
| Progressive Decision Modeling | |
| Necessary Measurements | |
| Define Selection Criteria | |

Additional good practices can be found in (ISO/IEC/IEEE 2009, Clause 6.3) and (INCOSE 2010, Section 5.3.1.5). (Parnell, Driscoll, and Henderson 2008) provide a thorough overview.

References

Citations

Blanchard, B. S. 2004. Systems Engineering Management. 3rd ed. New York, NY, USA: John Wiley & Sons.

Boehm, B. 1981. "Software risk management: Principles and practices." IEEE Software 8 (1) (January 1991): 32-41.

Cialdini, R.B. 2006. Influence: The Psychology of Persuasion. New York, NY, USA: Collins Business Essentials.

Detwarasiti, A., and R. D. Shachter. 2005. Influence diagrams for team decision analysis. Decision Analysis 2 (4): 207-28.

Gladwell, Malcolm. 2005. Blink: the Power of Thinking without Thinking. Boston, MA, USA: Little, Brown & Co.

Kenney, R. L., and H. Raiffa. 1976. Decision with multiple objectives: Preferences and value trade-offs. New York, NY, USA: John Wiley & Sons.

INCOSE. 2011. INCOSE Systems Engineering Handbook: A Guide for System Life Cycle Processes and Activities, version 3.2.1. San Diego, CA, USA: International Council on Systems Engineering (INCOSE), INCOSE-TP-2003-002-03.2.1.

Law, A. 2007. Simulation Modeling and Analysis. 4th ed. New York, NY, USA: McGraw Hill.

Rich, B. and L. Janos. 1996. Skunk Works. Boston, MA, USA: Little, Brown & Company.

Saaty, Thomas L. 2008. Decision Making for Leaders: The Analytic Hierarchy Process for Decisions in a Complex World. Pittsburgh, PA, USA: RWS Publications. ISBN 0-9620317-8-X.

Raiffa, H. 1997. Decision Analysis: Introductory Lectures on Choices under Uncertainty. New York, NY, USA: McGraw-Hill.

Samson, D. 1988. Managerial Decision Analysis. New York, NY, USA: Richard D. Irwin, Inc.

Schlaiffer, R. 1969. Analysis of Decisions under Uncertainty. New York, NY, USA: McGraw-Hill Book Company.

Skinner, D. 1999. Introduction to decision analysis. 2nd ed. Sugar Land, TX, USA: Probabilistic Publishing.

Wikipedia. 2011. Decision making software.

Primary References

Forsberg, K., H. Mooz, and H. Cotterman. 2005. Visualizing Project Management. 3rd ed. Hoboken, NJ, USA: John Wiley and Sons. pp. 154-155.

Law, A. 2007. Simulation Modeling and Analysis. 4th ed. New York, NY, USA: McGraw Hill.

Raiffa, H. 1997. Decision Analysis: Introductory Lectures on Choices under Uncertainty. New York, NY, USA: McGraw-Hill.

Saaty, Thomas L. 2008. Decision Making for Leaders: The Analytic Hierarchy Process for Decisions in a Complex World. Pittsburgh, PA, USA: RWS Publications. ISBN 0-9620317-8-X.

Samson, D. 1988. Managerial Decision Analysis. New York, NY, USA: Richard D. Irwin, Inc.

Schlaiffer, R. 1969. Analysis of Decisions under Uncertainty. New York, NY, USA: McGraw-Hill.

Additional References

Blanchard, B. S. 2004. Systems engineering management. 3rd ed. New York, NY, USA: John Wiley & Sons.

Boehm, B. 1981. "Software risk management: Principles and practices." IEEE Software 8 (1) (January 1991): 32-41.

Detwarasiti, A., and R. D. Shachter. 2005. Influence diagrams for team decision analysis. Decision Analysis 2 (4): 207-28.

INCOSE. 2011. INCOSE Systems Engineering Handbook: A Guide for System Life Cycle Processes and Activities, version 3.2.1. San Diego, CA, USA: International Council on Systems Engineering (INCOSE), INCOSE-TP-2003-002-03.2.1.

Kenney, R. L., and H. Raiffa. 1976. Decision with multiple objectives: Preferences and value trade-offs. New York, NY, USA: John Wiley & Sons.

Law, A. 2007. Simulation Modeling and Analysis. 4th ed. New York, NY, USA: McGraw Hill.

Parnell, G. S., P. J. Driscoll, and D. L. Henderson. 2010. Decision Making in Systems Engineering and Management. New York, NY, USA: John Wiley & Sons.

Rich, B. and L. Janos. 1996. Skunk Works. Boston, MA, USA: Little, Brown & Company.

Skinner, D. 1999. Introduction to decision analysis. 2nd ed. Sugar Land, TX, USA: Probabilistic Publishing.

Article Discussion

Signatures

--Groedler 01:35, 30 August 2011 (UTC)

--Dholwell 18:08, 5 September 2011 (UTC) core edit