System Analysis

Introduction, Definition and Purpose of System Analysis

System Analysis allows system developers to assess engineering data (in the most objective way possible) in order to select the most efficient system architecture, and to provide a consistent and accurate set of engineering data, including System Requirements.

During engineering, assessments should be performed whenever technical choices or decisions have to be made or justified, not only to compare alternative design architectures but also to take the System Requirements into account. System Analysis provides a rigorous approach to technical decision-making. It is used to perform trade-off studies, which include a set of analyses such as cost analysis, technical risk analysis, and effectiveness analysis. “Assess and select” is one of the major tasks of the system engineer.

Principles about System Analysis

One of the major development issues is ensuring that the global set of engineering data created by the engineers for a System of Interest (mainly Stakeholder Requirements, System Requirements, and Design Properties) is consistent and that the data values are relevant. Trade-off studies are at the centre of System Analysis; they address these aspects by providing means and techniques:

- to define assessment criteria based on System Requirements;

- to assess design properties of each candidate solution in comparison to these criteria;

- to score the candidate solutions globally, and to justify the scores.

The number and importance of the Assessment Criteria depend on the type of system considered and on its operational context of use. The various assessments use theoretical models, representation models, mock-ups, simulations, analytical models, etc.

Trade-off studies

In the context of the definition of a system, a trade-off study consists of comparing the characteristics of each candidate solution to determine which solution best addresses the Assessment Criteria as a whole. The characteristics analyzed are grouped into Costs Analysis, Technical Risks Analysis, and Effectiveness Analysis (NASA. 2007) page 1-360. Each class of analysis involves the following topics:

- Assessment criteria are used to classify the candidate solutions relative to one another. They are either absolute or relative. Examples: maximum cost per unit produced is cc$; cost reduction shall be x%; effectiveness improvement is y%; risk mitigation is z%.

- Boundaries identify and limit the characteristics or criteria to be taken into account in the analysis. Examples: kinds of costs to be taken into account; acceptable technical risks; type and level of effectiveness.

- Scales are used to quantify the characteristics or properties or criteria and to make comparisons. Their definition requires knowing the highest and lowest limits as well as the type of evolution of the characteristic (linear, logarithmic, etc.).

- An assessment score is assigned to a characteristic or criterion for each candidate solution. The goal of the trade-off study is to quantify the three variables of cost, risk, and effectiveness (and their decomposition into sub-variables) for each candidate solution. This operation is generally complex and requires the use of models.

- Optimizing the characteristics or properties of the most promising solutions improves their scores.
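As an illustration of how a scale turns a raw characteristic into a comparable value, the sketch below maps a value onto a 0-to-1 scale between its lowest and highest limits, with either a linear or a logarithmic evolution. The helper name `scale_value`, the 0-to-1 range, the clamping behavior, and the `higher_is_better` flag are assumptions made for this example, not part of any standard.

```python
import math

def scale_value(value, low, high, evolution="linear", higher_is_better=True):
    """Map a raw characteristic onto a 0..1 comparison scale (hypothetical helper).

    low and high are the lowest and highest limits of the scale; values outside
    the limits are clamped. evolution selects how the characteristic varies.
    """
    if not low < high:
        raise ValueError("low must be strictly less than high")
    v = min(max(value, low), high)  # clamp to the scale limits
    if evolution == "linear":
        s = (v - low) / (high - low)
    elif evolution == "log":
        if low <= 0:
            raise ValueError("a logarithmic scale needs positive limits")
        s = (math.log(v) - math.log(low)) / (math.log(high) - math.log(low))
    else:
        raise ValueError(f"unknown evolution: {evolution}")
    # Invert for characteristics where smaller is better (e.g. cost, mass)
    return s if higher_is_better else 1.0 - s

# Unit cost on a linear scale from 100 to 500, where lower cost is better
print(scale_value(200, 100, 500, higher_is_better=False))  # 0.75
# Data rate on a logarithmic scale from 1 to 1000
print(scale_value(10, 1, 1000, evolution="log"))
```

Placing every candidate on the same 0-to-1 scale is what later allows scores on different criteria to be weighted and added.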

A decision-making process is not an exact science, and trade-off studies have limits. The following concerns shall be taken into account:

- Subjective variables: for example, the component has to be beautiful. What is a beautiful component?

- Uncertain data: for example, inflation has to be taken into account to estimate the cost of maintenance over the complete life cycle. What will inflation be over the next 5 years?

- Sensitivity analysis: the global assessment score associated with each candidate solution is not absolute. To obtain a robust selection, it is recommended to perform a sensitivity analysis, which consists of perturbing the decision model with small variations of the assessment criteria values (weights). The selection is robust if these variations do not change the order of the scores.
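The sensitivity analysis described in the last item above can be sketched as follows: a weighted-sum global score is computed for each candidate, each weight is then perturbed up and down by a small relative amount (with all weights renormalized to sum to 1), and the selection is reported as robust only if the ranking never changes. The weighted-sum aggregation, the candidate names, scores, weights, and the +/-10 % perturbation are illustrative assumptions.

```python
def global_scores(scores, weights):
    """Weighted-sum global score for each candidate (weights assumed to sum to 1)."""
    return {c: sum(w * s for w, s in zip(weights, vals)) for c, vals in scores.items()}

def ranking(scores, weights):
    """Candidates ordered from best to worst global score."""
    g = global_scores(scores, weights)
    return sorted(g, key=g.get, reverse=True)

def is_robust(scores, weights, delta=0.10):
    """Perturb each weight by +/- delta (relative), renormalize, and check the order."""
    reference = ranking(scores, weights)
    for i in range(len(weights)):
        for sign in (-1, 1):
            perturbed = list(weights)
            perturbed[i] *= 1 + sign * delta
            total = sum(perturbed)
            perturbed = [w / total for w in perturbed]
            if ranking(scores, perturbed) != reference:
                return False
    return True

# Hypothetical scores (0..1) on three criteria: cost, risk, effectiveness
candidates = {
    "A": [0.8, 0.6, 0.7],
    "B": [0.6, 0.7, 0.8],
    "C": [0.4, 0.9, 0.5],
}
weights = [0.5, 0.2, 0.3]
print(ranking(candidates, weights))    # ['A', 'B', 'C']
print(is_robust(candidates, weights))  # True
```

With two candidates whose global scores are very close, the same check would typically report a non-robust selection, signalling that the choice depends on the exact weights.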

A relevant trade-off study specifies the assumptions, variables, and confidence intervals of the results. Knowledge management and prior experience, which lead to significant databases and to models whose relevance and efficiency have been demonstrated, are a considerable advantage.

Costs Analysis

The reference index for cost analysis is the "global life cycle cost". This baseline can be adapted according to the project and the system. The global life cycle cost includes, for example, the following costs:

- Development: engineering, development tools (equipment and software), project management, test benches, mock-ups and prototypes, documentation, training

- Product manufacturing or service realization: raw materials and supplies, spare parts and stock assets, resources necessary for operation (water, electric power, etc.), risks and nuisances, evacuation, treatment and storage of waste or rejects produced, overhead expenses (taxes, management, purchasing, documentation, quality, cleaning, regulatory controls, etc.), packing and storage, required documentation

- Sales and after-sales: overhead expenses (subsidiaries, stores, workshops, distribution, information acquisition, etc.), complaints and warranties

- Customer utilization: taxes, installation (customer), resources necessary for the operation of the product (water, fuel, lubricants, etc.), financial risks and nuisances

- Supply chain: transportation and delivery

- Maintenance: field services, preventive maintenance, regulatory controls, spare parts and stocks, warranty costs

- Disposal: collection, dismantling, transportation, treatment, waste recycling
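A simple way to aggregate the global life cycle cost is to sum each cost category over the years in which it occurs, escalating constant-price estimates by an inflation rate, which is exactly the kind of uncertain datum mentioned among the limits of trade-off studies. The sketch below is a minimal illustration; the category names, the yearly profile, the 4-year horizon, and the 2 % inflation rate are all hypothetical.

```python
def life_cycle_cost(cost_profile, inflation_rate):
    """Total then-year life cycle cost and its breakdown by category.

    cost_profile maps a cost category to a list of yearly costs expressed at
    year-0 prices; the cost of year k is escalated by (1 + inflation_rate) ** k.
    """
    by_category = {}
    for category, yearly in cost_profile.items():
        by_category[category] = sum(
            cost * (1 + inflation_rate) ** year for year, cost in enumerate(yearly)
        )
    return sum(by_category.values()), by_category

# Hypothetical profile (k$ at year-0 prices) over a 4-year horizon
profile = {
    "development": [500, 300, 0, 0],
    "manufacturing": [0, 200, 200, 200],
    "maintenance": [0, 0, 50, 50],
    "disposal": [0, 0, 0, 40],
}
total, detail = life_cycle_cost(profile, inflation_rate=0.02)
print(round(total, 1))
print({k: round(v, 1) for k, v in detail.items()})
```

Re-running the function with several inflation rates is a quick way to see how strongly the total depends on this uncertain assumption.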

Technical Risks Analysis

Risk analysis in any domain is based on three activities:

- Analysis of potential threats or undesired events and their probability of occurrence.

- Analysis of the consequences of these threats or undesired events and their classification on a scale of gravity.

- The installation of protections or preventions to reduce the probabilities of threats and/or the levels of harmful effect to acceptable values.
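The three activities above are often supported by a criticality index that combines the probability of occurrence with the gravity of the consequences. The sketch below assumes 1-to-5 ordinal scales for both, uses their product as criticality, and checks each risk against a hypothetical acceptability threshold before and after a prevention or protection measure. The scales, the threshold of 6, and the example events are assumptions, not values prescribed by this article.

```python
from dataclasses import dataclass

@dataclass
class Risk:
    event: str
    probability: int  # 1 (rare) .. 5 (almost certain), assumed ordinal scale
    gravity: int      # 1 (negligible) .. 5 (catastrophic), assumed ordinal scale

    def criticality(self) -> int:
        return self.probability * self.gravity

ACCEPTABLE = 6  # hypothetical threshold: criticality <= 6 is acceptable

def unacceptable(risks):
    """Events whose criticality exceeds the acceptability threshold."""
    return [r.event for r in risks if r.criticality() > ACCEPTABLE]

risks = [
    Risk("supplier delivers non-compliant parts", probability=3, gravity=4),
    Risk("software obsolescence", probability=2, gravity=3),
    Risk("operator tuning error", probability=4, gravity=2),
]
print(unacceptable(risks))  # ['supplier delivers non-compliant parts', 'operator tuning error']

# A prevention (incoming inspection) lowers the probability of the first risk;
# a protection (an interlock limiting the effect of a wrong tuning) lowers the
# gravity of the third one.
risks[0].probability = 1
risks[2].gravity = 1
print(unacceptable(risks))  # []
```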

Technical risks arise when the system can no longer satisfy the System Requirements. Their causes lie in the solution itself and/or in the requirements. They manifest as insufficient effectiveness and can have multiple causes, for example: incorrect assessment of technological capabilities; failure of parts, breakdowns, or breakage; obsolescence of equipment, parts, or software; supplier weaknesses (non-compliant parts, supply delays, etc.); and human factors (insufficient training, wrong tunings, handling errors, unsuitable procedures, malice). Some risks come from changes in the context to which the project or the company cannot respond: natural events (storms, floods, etc.), political decisions or events, changes in regulations and standards, and changes of policy or strategy.

Note: Technical risks should not be confused with project risks, even though the method used to manage them is the same. Technical risks address the system itself, not the project for its development. Of course, technical risks may affect project risks. Technical risks can be managed in the same way as project risks throughout development until they are satisfactorily mitigated.

Effectiveness Analysis

Effectiveness studies use the different types of requirements as a starting point. The effectiveness of the system involves several essential characteristics that are generally grouped into the following analyses, including but not limited to: performance, usability, dependability, manufacturing, maintenance or support, and environment. These analyses highlight candidate solutions under various aspects. It is essential to establish a classification in order to limit the number of analyses to the truly significant aspects. The main difficulty of effectiveness analysis is to sort and select the right set of effectiveness aspects; for example, if the product is made for a single use, maintainability and capability for evolution will not be relevant criteria.

Process Approach - System Analysis

Purpose and principles of the approach

The system analysis process is used to: (1) provide a rigorous basis for technical decision making, resolution of requirement conflicts, and assessment of alternative physical solutions; (2) determine progress in satisfying System Requirements and derived requirements; (3) support risk management; and (4) ensure that decisions are made only after evaluating the cost, schedule, performance, and risk effects on the engineering or reengineering of the system. (ANSI/EIA. 1998)

In (NASA. 2007) page 1-360, this process is called the "Decision Analysis Process": the Decision Analysis Process is used to help evaluate technical issues, alternatives, and their uncertainties to support decision-making.

System analysis supports other system definition processes:

- Stakeholder Requirements definition and System Requirements definition processes use system analysis to solve issues relating to conflicts among the set of requirements, in particular those related to costs, technical risks, and effectiveness (performances, operational conditions, and constraints). System Requirements subject to high risks or which would require different architectures are discussed.

- The Architectural Design process uses it to assess characteristics or Design Properties of candidate Functional and Physical Architectures, providing arguments for selecting the most efficient one in terms of costs, technical risks, and effectiveness (example: performances, dependability, human factors, etc.).

Like any system definition process, the System Analysis Process is iterative: each operation is carried out several times, and each iteration improves the precision of the analysis.

Activities of the Process

Major activities and tasks performed during this process include:

- Plan the trade-off studies.

- Determine the number of candidate solutions to analyze, the methods and procedures to be used, the expected results (objects to be selected: Functional Architecture/Scenario, Physical Architecture, System Element, etc.), the justification items.

- Schedule the analyses according to the availability of models, engineering data (System Requirements, Design Properties), skilled personnel, and procedures.

- Define the selection criteria model.

- Select the Assessment Criteria from non-functional requirements (performances, operational conditions, constraints, etc.).

- Sort and order the Assessment Criteria.

- Establish a comparison scale for each Assessment Criterion, and weight each Assessment Criterion according to its importance relative to the others.

- Identify candidate solutions and the related models and data.

- Assess candidate solutions using previously defined methods or procedures.

- Carry out costs analysis, technical risks analysis, and effectiveness analysis placing every candidate solution on every Assessment Criterion comparison scale.

- Assign an Assessment Score to every candidate solution.

- Provide results to the calling process: Assessment Criteria, comparison scales, solutions’ scores, Assessment Selection (glossary), and, where appropriate, recommendations and related arguments.

Artifacts and Ontology Elements

This process may create several artifacts such as:

- Selection criteria model (list, scales, weighting)

- Costs, risks, effectiveness analysis reports

- Justification reports

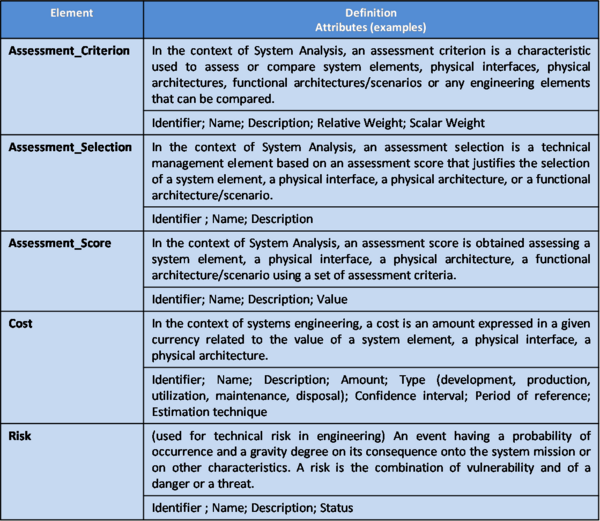

This process handles the ontology elements of Table 1 within System Analysis.

Table 1. Main ontology elements as handled within System Analysis

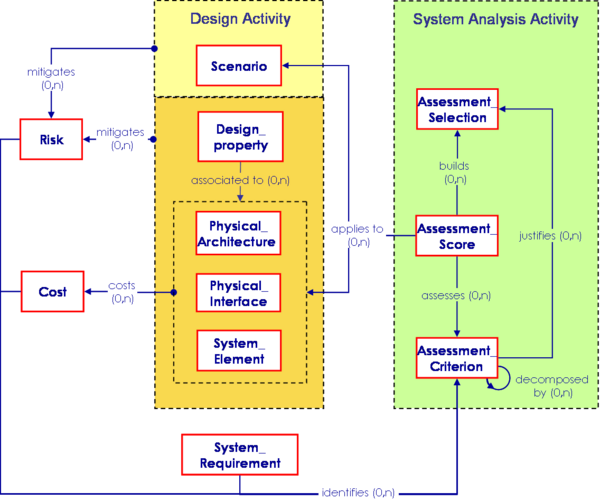

The main relationships between ontology elements of System Analysis are presented in Figure 1.

Figure 1. System Analysis elements relationships with other engineering elements (Faisandier, 2011)

Checking and correctness of System Analysis

TO BE WRITTEN


Methods and Modeling Techniques

- General usage of models: Various types of models can be used in the context of System Analysis:

- Physical models are scale models that allow physical phenomena to be simulated; they are specific to each discipline. Associated tools include, for example, mock-ups, vibration tables, test benches, prototypes, decompression chambers, and wind tunnels.

- Representation models are mainly used to simulate the behavior of a system; examples include Enhanced Functional Flow Block Diagrams (EFFBD), Statecharts, and State Machine Diagrams (SysML).

- Analytical models are mainly used to establish estimated values; they can be deterministic or probabilistic (also known as stochastic). Analytical models use equations or diagrams to approximate the real operation of the system. They range from the simplest (an addition) to the most complicated (a probability distribution with several variables).

- Use the right models depending on the progress of the project:

- At the beginning of the project, first studies use simple tools that allow rough approximations, which have the advantage of not requiring much time or effort; these approximations are often sufficient to eliminate unrealistic or outlying candidate solutions.

- As the project progresses, the precision of the data must be improved in order to compare the candidate solutions that are still competing. The work is more complicated when the level of innovation is high.

- A system engineer alone cannot model a complex system; they must be supported by skilled people from the various disciplines involved.

- Deterministic models:

- Statistical models belong to this category. The principle is to build a model from a significant amount of data and results from former projects; such models apply only to system elements/components whose technology already exists.

- Models by analogy also use former projects: the system element being studied is compared to an existing system element with known characteristics (cost, reliability, etc.). These characteristics are then adjusted based on specialists' expertise.

- Learning curves allow the evolution of a characteristic or a technology to be forecast. Example of evolution: "each time the number of units produced doubles, the unit cost is reduced by a certain percentage, which is generally constant".

- Probabilistic models (also called stochastic models): probability theory allows candidate solutions to be ranked according to the consequences of a set of events used as criteria. These models are applicable if the number of criteria is limited and the combination of possible events is simple. Ensure that, at each node, the probabilities of all events sum to 1.

- Specialist expertise: when the values of Assessment Criteria cannot be given objectively or precisely, or when the subjective aspect dominates, specialists can be asked for their expertise. The estimate proceeds in four steps:

- Select interviewees to collect the opinion of qualified people for the considered field.

- Draft a questionnaire: a precise questionnaire makes analysis easy, but an overly closed questionnaire risks neglecting significant points.

- Interview a limited number of specialists using the questionnaire, including an in-depth discussion to obtain precise opinions.

- Analyze the data with several different people, comparing their impressions until they agree on a classification of Assessment Criteria and/or candidate solutions.

- Multi-criteria decision models: when the number of criteria is greater than 10, it is recommended to establish a multi-criteria decision model. It is built through the following actions:

- Organize the criteria as a hierarchy (or a decomposition tree).

- Associate each criterion in each branch of the tree with a weight relative to the other criteria at the same level.

- Calculate a scalar weight for each leaf criterion by multiplying all the weights along its branch.

- Score every candidate solution on the leaf criteria; sum the scores, weighted by the leaf weights, to obtain a global score for each candidate solution; compare the global scores.

- A computerized tool makes it possible to perform sensitivity analysis in order to obtain a robust choice.
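Among the deterministic models listed above, a statistical model can be as simple as a cost estimating relationship fitted to data from former projects. The sketch below assumes a linear relationship cost = a + b × mass, fitted by ordinary least squares; the choice of mass as cost driver, the linear form, the data points, and the helper names `fit_linear` and `estimate_cost` are hypothetical. In line with the restriction to existing technologies, the estimate refuses to extrapolate outside the range of the historical data.

```python
# Hypothetical historical data from former projects: element mass (kg), unit cost (k$)
mass = [10.0, 20.0, 30.0, 40.0, 50.0]
cost = [120.0, 190.0, 270.0, 330.0, 410.0]

def fit_linear(x, y):
    """Ordinary least-squares fit of y = a + b * x; returns (a, b)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    b = sxy / sxx
    return my - b * mx, b

a, b = fit_linear(mass, cost)

def estimate_cost(m):
    """Estimated unit cost; refuses to extrapolate beyond the historical data range."""
    if not min(mass) <= m <= max(mass):
        raise ValueError("outside the range of historical data")
    return a + b * m

print(f"cost = {a:.1f} + {b:.2f} * mass")  # cost = 48.0 + 7.20 * mass
print(estimate_cost(35.0))
```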
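The learning-curve rule given among the deterministic models above is commonly written as C(n) = C(1) × n^b with b = log2(r), where r is the learning rate: for example, r = 0.9 when each doubling of the number of units reduces the unit cost by 10 %. The sketch below assumes a constant learning rate and this unit-cost form of the curve; the first-unit cost and the rate are illustrative.

```python
import math

def unit_cost(n, first_unit_cost, learning_rate):
    """Cost of the n-th unit when each doubling of quantity multiplies cost by learning_rate."""
    b = math.log2(learning_rate)  # negative exponent when learning_rate < 1
    return first_unit_cost * n ** b

# Hypothetical element: first unit costs 1000 k$, 90 % learning rate
for n in (1, 2, 4, 8, 100):
    print(n, round(unit_cost(n, 1000.0, 0.9), 1))
```

Units 2, 4, and 8 cost about 900, 810, and 729 k$, reproducing the constant percentage reduction at each doubling.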
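A minimal sketch of the probabilistic models described above is a one-level decision tree: each candidate solution leads to a chance node whose branches are events with a probability and a consequence (here a cost), and candidates are ranked by expected consequence. The code also performs the recommended check that probabilities at each node sum to 1. The candidate names, probabilities, and costs are hypothetical, and ranking by expected value is only one possible decision rule.

```python
import math

# Hypothetical chance nodes: candidate -> list of (event, probability, cost in k$)
tree = {
    "proven technology": [("nominal", 0.9, 100.0), ("rework", 0.1, 300.0)],
    "new technology": [("nominal", 0.6, 70.0), ("rework", 0.3, 200.0), ("failure", 0.1, 500.0)],
}

def check_node(branches):
    """Raise if the branch probabilities of a chance node do not sum to 1."""
    total = sum(p for _, p, _ in branches)
    if not math.isclose(total, 1.0):
        raise ValueError(f"probabilities sum to {total}, not 1")

def expected_cost(branches):
    """Probability-weighted cost of a chance node, after validating it."""
    check_node(branches)
    return sum(p * c for _, p, c in branches)

ranked = sorted(tree, key=lambda cand: expected_cost(tree[cand]))
for cand in ranked:
    print(cand, round(expected_cost(tree[cand]), 1))
```

Here the new technology has the cheapest nominal outcome, but its failure branch raises its expected cost above that of the proven technology.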
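The multi-criteria decision model steps above can be sketched as follows: criteria are organized in a tree, each child carries a weight relative to its siblings, leaf weights are obtained by multiplying the weights along each branch, and the global score of a candidate is the sum of its leaf scores multiplied by these leaf weights. The tree, the weights, the 0-to-10 scores (higher is better, including for technical risk), and the weighted-sum aggregation are hypothetical assumptions for illustration.

```python
# Hypothetical criteria tree: (name, weight relative to siblings, children)
TREE = ("system", 1.0, [
    ("cost", 0.4, [
        ("development", 0.6, []),
        ("maintenance", 0.4, []),
    ]),
    ("effectiveness", 0.4, [
        ("performance", 0.5, []),
        ("dependability", 0.3, []),
        ("usability", 0.2, []),
    ]),
    ("technical risk", 0.2, []),
])

def leaf_weights(node, parent_weight=1.0):
    """Scalar weight of each leaf = product of the weights along its branch."""
    name, weight, children = node
    w = parent_weight * weight
    if not children:
        return {name: w}
    result = {}
    for child in children:
        result.update(leaf_weights(child, w))
    return result

def global_score(leaf_scores, weights):
    """Weighted sum of a candidate's scores on the leaf criteria."""
    return sum(weights[leaf] * leaf_scores[leaf] for leaf in weights)

weights = leaf_weights(TREE)
candidates = {
    "A": {"development": 7, "maintenance": 5, "performance": 8,
          "dependability": 6, "usability": 7, "technical risk": 4},
    "B": {"development": 5, "maintenance": 8, "performance": 7,
          "dependability": 8, "usability": 6, "technical risk": 7},
}
for name, scores in candidates.items():
    print(name, round(global_score(scores, weights), 2))
```

Because sibling weights sum to 1 at every level, the leaf weights also sum to 1, so global scores stay on the same 0-to-10 scale as the leaf scores.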

Application to Product systems, Service systems, Enterprise systems

TO BE WRITTEN

Practical Considerations about System Analysis

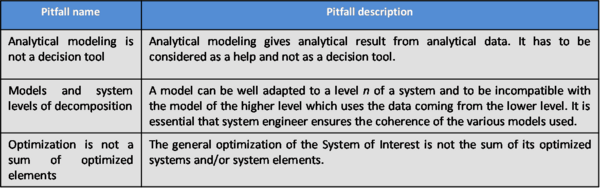

Major pitfalls encountered with System Analysis are presented in Table 2.

Table 2 – Pitfalls with System Analysis

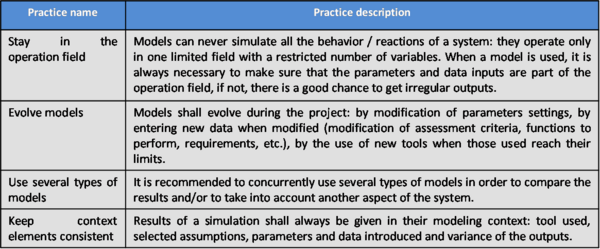

Proven practices with System Analysis are presented in Table 3.

Table 3 – Proven practices with System Analysis

References


Citations

List all references cited in the article. Note: SEBoK 0.5 uses Chicago Manual of Style (15th ed). See the BKCASE Reference Guidance for additional information.

ANSI/EIA. 1998. Processes for engineering a system. Philadelphia, PA, USA: American National Standards Institute (ANSI)/Electronic Industries Association (EIA), ANSI/EIA-632-1998.

NASA. 2007. Systems engineering handbook. Washington, D.C.: National Aeronautics and Space Administration (NASA), NASA/SP-2007-6105.

Primary References


ANSI/EIA. 1998. Processes for engineering a system. Philadelphia, PA, USA: American National Standards Institute (ANSI)/Electronic Industries Association (EIA), ANSI/EIA-632-1998.

NASA. 2007. Systems engineering handbook. Washington, D.C.: National Aeronautics and Space Administration (NASA), NASA/SP-2007-6105.

Additional References


Faisandier, A. 2011. Engineering and architecting multidisciplinary systems. (expected--not yet published).